Full Body 3D Scanning System

A comprehensive photogrammetry pipeline for high-quality 3D human digitization (Dopl)

In 2020, the project set out to develop a commercial-grade 3D scanning system that would satisfy three critical requirements at once: exceptional scan quality, operational robustness, and user accessibility. The scope covered both the hardware architecture and the complete digital asset processing pipeline.

The research and development timeline was limited to 10 months, which required an efficient methodology and rapid iteration cycles. The deliverable was a production-ready full-body 3D capture system with end-to-end processing, from raw image acquisition through final textured mesh generation, suitable for immediate commercial deployment.

Hardware reliability and operational stability for continuous commercial use

Sub-millimeter geometric accuracy and photorealistic texture reproduction

Intuitive operation requiring minimal technical expertise from operators

Accelerated R&D cycle from concept to production-ready system

This research presents a comprehensive photogrammetry-based system for full-body 3D digitization, developed over a 10-month research and development initiative that delivered both the hardware architecture and the complete digital asset processing pipeline. The methodology was simulation-first: extensive virtual testing preceded hardware development, so camera placement and photogrammetric reconstruction parameters were optimized before physical implementation.

The resulting architecture comprises an 87-camera DSLR array with hardware-synchronized capture capabilities, deployed across five operational systems that have processed tens of thousands of subjects. The system demonstrates production-scale viability for applications in computer graphics, virtual reality, biometric analysis, and digital anthropology. This work contributes a validated framework for transitioning photogrammetry research from virtual simulation to large-scale commercial deployment within constrained development timelines.

The research methodology prioritized computational simulation as a risk mitigation strategy for hardware investment. A virtual scanning environment was constructed to model the complete photogrammetric capture system, including camera pose estimation, optical characteristics, lighting conditions, and subject positioning parameters. This digital twin approach enabled parametric optimization across thousands of configuration permutations.

The simulation framework evaluated critical variables including camera quantity, spatial distribution geometry, baseline distances, and overlap ratios. Monte Carlo methods were employed to assess robustness under varying subject poses and environmental conditions. This computational validation phase significantly reduced development cycle time and capital expenditure while ensuring the physical system would achieve target performance specifications upon deployment.
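A toy version of this kind of Monte Carlo coverage study is sketched below. It is an illustration under stated assumptions, not the project's simulator: the Fibonacci-lattice layout, the spherical stand-in for the subject, the 60° viewing-angle limit, and every function and parameter name are hypothetical choices made for the example.

```python
import math
import random


def fibonacci_sphere(n, radius):
    """Place n cameras roughly evenly on a sphere (assumed stand-in layout)."""
    golden_angle = math.pi * (3.0 - math.sqrt(5.0))
    cameras = []
    for i in range(n):
        z = 1.0 - 2.0 * (i + 0.5) / n          # evenly spaced heights in (-1, 1)
        r = math.sqrt(1.0 - z * z)             # ring radius at that height
        theta = golden_angle * i
        cameras.append((radius * r * math.cos(theta),
                        radius * r * math.sin(theta),
                        radius * z))
    return cameras


def random_unit_vector(rng):
    """Draw a uniformly distributed direction on the unit sphere."""
    while True:
        v = (rng.gauss(0, 1), rng.gauss(0, 1), rng.gauss(0, 1))
        norm = math.sqrt(sum(c * c for c in v))
        if norm > 1e-9:
            return tuple(c / norm for c in v)


def coverage_fraction(cameras, subject_radius, max_angle_deg, min_views,
                      trials, rng, jitter=0.0):
    """Estimate the fraction of surface points seen by at least min_views cameras.

    The subject is modeled as a sphere whose center is randomly displaced by up
    to `jitter` per axis in each trial. A camera "sees" a point when the angle
    between the surface normal and the direction to the camera is within
    max_angle_deg.
    """
    cos_limit = math.cos(math.radians(max_angle_deg))
    covered = 0
    for _ in range(trials):
        normal = random_unit_vector(rng)
        center = tuple(rng.uniform(-jitter, jitter) for _ in range(3))
        point = tuple(c + subject_radius * n for c, n in zip(center, normal))
        views = 0
        for cam in cameras:
            to_cam = tuple(ci - pi for ci, pi in zip(cam, point))
            dist = math.sqrt(sum(x * x for x in to_cam))
            cos_angle = sum(d * n for d, n in zip(to_cam, normal)) / dist
            if cos_angle >= cos_limit:
                views += 1
        if views >= min_views:
            covered += 1
    return covered / trials


if __name__ == "__main__":
    rng = random.Random(42)
    for count in (24, 48, 87):
        cams = fibonacci_sphere(count, radius=2.0)
        frac = coverage_fraction(cams, 0.5, 60.0, 3, 2000, rng, jitter=0.1)
        print(f"{count} cameras: {frac:.3f} of samples seen by 3+ cameras")
```

Sweeping the camera count, rig radius, and angle limit in a loop like this gives a rough feel for how coverage responds to each variable. A realistic study would replace the sphere with posed human meshes and account for self-occlusion.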

Following virtual validation, system specifications were formalized into engineering documentation for manufacturer collaboration. The transition from simulation to physical hardware involved iterative refinement of mechanical design, electrical synchronization systems, and calibration procedures. Five complete scanner units were manufactured through this collaborative development process, with each iteration incorporating empirical findings from operational testing.

Production units integrated custom triggering hardware for sub-millisecond synchronization across the 87-camera array, automated calibration routines to maintain geometric accuracy, and optimized data acquisition pipelines for high-throughput operation. The manufacturing phase validated the scalability and reproducibility of the virtual design parameters in physical deployments.

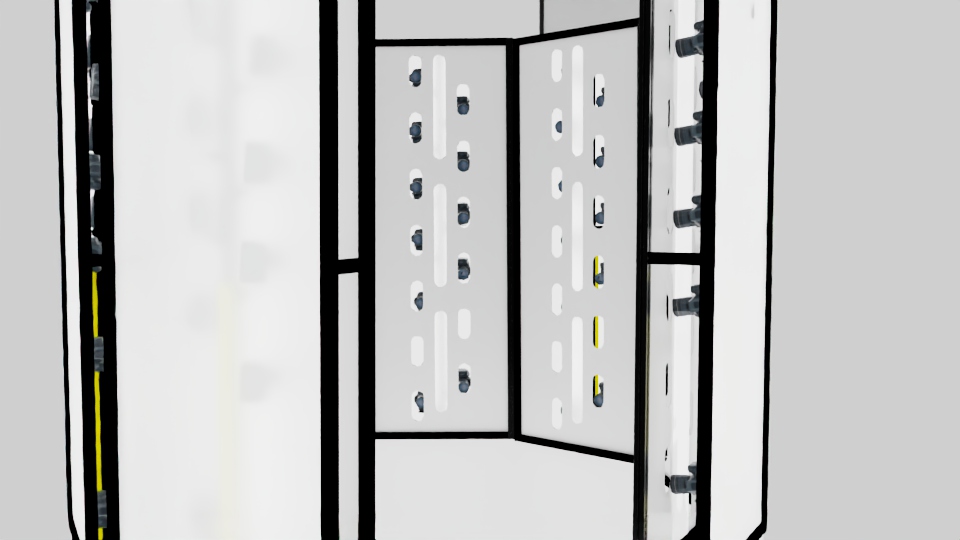

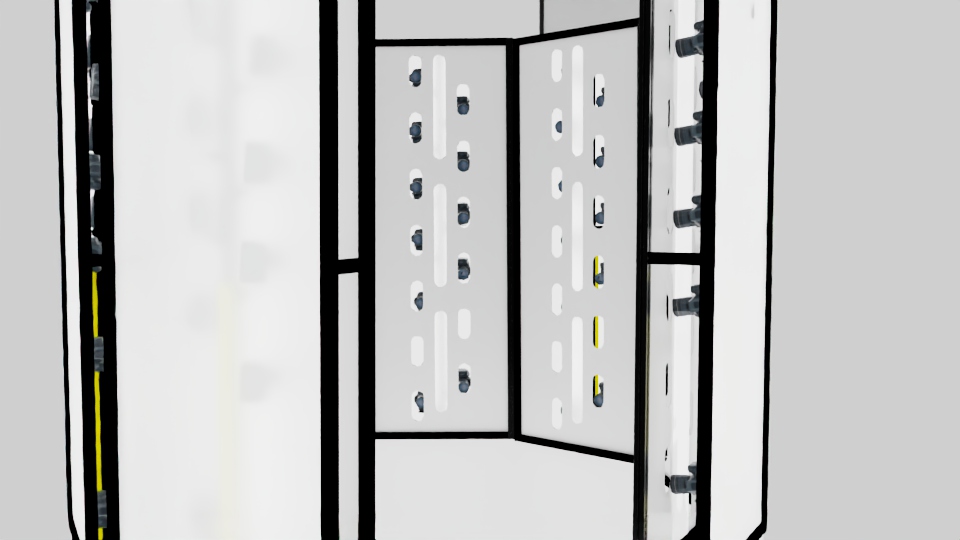

The photogrammetric capture system employs an 87-camera DSLR array arranged in a geodesic-inspired spherical configuration to maximize surface coverage while minimizing occlusion artifacts. Camera placement follows an optimized distribution pattern derived from virtual simulation, ensuring adequate baseline distances for stereoscopic reconstruction while maintaining sufficient image overlap for robust feature matching.
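The tension between baseline and overlap can be made concrete with the standard depth-uncertainty approximation for a rectified stereo pair. This is a textbook relation offered as an illustration, not a formula taken from the system's design documents. The symbols are introduced here: $f$ is the focal length in pixels, $B$ the baseline between two cameras, $Z$ the distance to the subject, $d$ the disparity, and $\sigma_d$ the feature-matching error in pixels.

$$
d = \frac{fB}{Z}
\quad\Longrightarrow\quad
\sigma_Z \approx \frac{Z^2}{fB}\,\sigma_d
$$

Depth error shrinks as the baseline grows, but a wider baseline also changes the subject's appearance between neighboring views, which makes feature matching less reliable. Camera spacing therefore has to balance the two effects.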

Synchronization is achieved through custom hardware triggering implemented on Raspberry Pi single-board computers, enabling simultaneous exposure across all cameras with sub-millisecond precision. This temporal alignment is critical when capturing human subjects: any movement between exposures would leave the views mutually inconsistent and compromise reconstruction accuracy. The system also incorporates automated camera calibration protocols to maintain geometric precision throughout its operational lifecycle.
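One way to verify a sub-millisecond target is to compare per-camera trigger timestamps after each capture. The sketch below assumes such timestamps are available on a common clock, for example from a logic analyzer on the trigger lines. The function name, the camera IDs, and the 1000 µs tolerance are hypothetical and not part of the described system.

```python
from statistics import median


def sync_report(timestamps_us, tolerance_us=1000):
    """Summarize trigger skew for one capture.

    timestamps_us maps a camera ID to its trigger time in microseconds,
    all measured against the same clock (an assumption of this sketch).
    Returns the total skew and the cameras whose offset from the median
    trigger time exceeds tolerance_us.
    """
    reference = median(timestamps_us.values())
    skew = max(timestamps_us.values()) - min(timestamps_us.values())
    outliers = sorted(
        cam for cam, t in timestamps_us.items()
        if abs(t - reference) > tolerance_us
    )
    return skew, outliers


if __name__ == "__main__":
    capture = {
        "cam01": 1_000_000,
        "cam02": 1_000_200,
        "cam03": 1_000_150,
        "cam04": 1_003_000,  # fired about 3 ms late
    }
    skew, late = sync_report(capture)
    print(f"total skew: {skew} us, out of tolerance: {late}")
```

Measuring against the median rather than the earliest timestamp keeps a single early or late camera from shifting the reference for all the others.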

Full 360° spherical coverage with optimized camera placement

Simultaneous capture trigger across all cameras

Geometric precision system for accurate reconstruction

Controlled illumination rig for consistent results

The photogrammetric reconstruction pipeline processes multi-view imagery through sequential algorithmic stages, transforming raw sensor data into topologically consistent 3D mesh representations with photorealistic texture mapping. The computational workflow integrates classical computer vision techniques with modern optimization frameworks.

Synchronized acquisition

Keypoint detection

Point cloud generation

Surface reconstruction

Color projection

Final optimization

The system architecture integrates Reality Capture as the primary photogrammetric reconstruction engine, supplemented by custom Python and Java code for pipeline automation and data management. Hardware control uses Raspberry Pi single-board computers for camera synchronization and triggering. Computational bottlenecks are addressed through GPU acceleration with CUDA, enabling near-real-time processing of high-resolution multi-view datasets. The software stack emphasizes modularity and scalability to accommodate future algorithmic enhancements and deployment scenarios.

Operational data from large-scale deployment provided insights into calibration drift patterns, processing throughput optimization strategies, and failure mode analysis. Each capture session contributed to the refinement of automated quality assurance protocols and data validation procedures. The statistical robustness achieved across thousands of acquisitions demonstrates the commercial viability of photogrammetry-based human digitization at production scale.
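As an illustration of what an automated check for calibration drift might look like, the sketch below flags cameras whose mean reprojection error is well above the rest of the array. The use of reprojection error as the drift signal, the median-plus-MAD threshold, and all names and default values are assumptions made for this example. The source does not describe the actual quality assurance rules.

```python
from statistics import median


def flag_drifting_cameras(errors_px, k=3.0, min_scale_px=0.05):
    """Return camera IDs whose reprojection error is unusually high.

    errors_px maps a camera ID to its mean reprojection error in pixels
    for one capture session. A camera is flagged when its error exceeds
    median + k * scale, where scale is the median absolute deviation (MAD)
    floored at min_scale_px so that a very uniform array does not flag
    negligible differences.
    """
    values = list(errors_px.values())
    center = median(values)
    mad = median(abs(v - center) for v in values)
    threshold = center + k * max(mad, min_scale_px)
    return sorted(cam for cam, err in errors_px.items() if err > threshold)


if __name__ == "__main__":
    session = {
        "cam01": 0.40,
        "cam02": 0.42,
        "cam03": 0.38,
        "cam04": 0.41,
        "cam05": 1.30,  # likely bumped or loosened mount
    }
    print("recalibrate:", flag_drifting_cameras(session))
```

A robust statistic such as the MAD is used so that one badly drifted camera does not inflate the threshold and hide itself.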

The scanning systems have been deployed across diverse industry verticals, supporting high-profile productions and enterprise applications. These collaborations demonstrate the system's versatility and ability to meet demanding professional requirements across entertainment, gaming, academic, and fashion technology sectors.

The work spans entertainment production (Severance Season 2, Jim Henson Company, Apple TV+), music entertainment (Sony Music artist digitization), interactive media (Activision, Riot Games), virtual reality (High Fidelity VR social platform), academic institutions (Harvard Lampoon), and fashion technology (CLO3D virtual fitting software). The system's adaptability to varied workflow requirements, from traditional puppetry studios to game development, virtual reality avatars, and virtual performance applications, supports its design philosophy of balancing technical excellence with operational flexibility. Each deployment brought its own technical challenges, which informed system refinements and expanded the technology's application scope.

High-fidelity character asset generation for real-time rendering engines and immersive environments

Digital human replication for visual effects pipelines and performance capture integration

Morphological analysis and longitudinal documentation for medical research applications

Cultural heritage preservation through high-resolution anthropometric archiving

This research demonstrates a validated methodology for transitioning photogrammetric scanning systems from computational simulation through physical prototyping to production-scale deployment. The simulation-first approach to hardware design optimization proved effective in reducing development risk and accelerating time-to-deployment for the 87-camera DSLR array architecture.

Deployment of five operational systems processing tens of thousands of subjects validates both the technical robustness and commercial viability of the proposed architecture. The integration of Reality Capture with custom Python and Java pipeline automation, coupled with Raspberry Pi-based hardware synchronization, demonstrates a scalable framework for high-throughput human digitization.

Future research directions include investigation of real-time reconstruction algorithms leveraging neural radiance fields, extension to dynamic capture scenarios with temporal coherence constraints, and development of adaptive calibration systems for minimizing maintenance overhead in long-term deployments. The established virtual-to-physical development methodology provides a foundation for continued innovation in photogrammetric capture systems.